Puppet

Puppet is the main configuration management tool used on the Wikimedia clusters. For additional background, the Wikimedia article is a good introduction.

The Puppet agent is the client which runs on all our servers (triggered every 30 minutes by a systemd timer) and it manages the configuration of these machines with configuration data pulled from the Puppet servers.

Making changes

A short tutorial on how to get set up to propose and merge changes in Puppet can be found here: Adding_users_on_puppet

Updating operations/puppet

For security purposes, changes merged into the puppet git repository are not immediately applied to nodes. After merging in Gerrit someone with SRE-level access needs to review the changes one last time on one of the Puppet servers. This final check is crucial to making sure that malicious puppet changes don't sneak their way in, as well as making sure that you don't deploy something that wasn't ready to be deployed. puppet-merge is the custom wrapper script designed to formalize the merge steps while making it possible to review actual diffs.

The checkout of the operations/puppet repository is hosted on the Puppet servers in /srv/git/operations/puppet. Only one server is the designated host for merging changes (to ensure that merging is free of race conditions); this server is currently puppetserver1001.eqiad.wmnet (it is configured via the Hiera variable puppet_merge_server).

Pending changes

If you notice some changes that are not yours in the diff, reach out on IRC to #wikimedia-sre to see if these changes are safe to merge.

If you are not sure, do not merge.

Additional notes

One might notice that two puppet-merge wrappers exist: A Bash-based one and a Python-based one. The Bash wrapper is intended for use by a real user while the Python wrapper is for automation (e.g. via the git-puppet user). Eventually, only the Python wrapper will exist.

Updating labs/private.git

By default this is not necessary. When puppet-merge is invoked by a user, and --labsprivate is not specified, it will check for labs/private changes and merge them if necessary.

If you wish to only merge labs/private changes in cloud VMs, invoke the script with the --labsprivate flag.

user@puppetmaster1001:~ $ sudo puppet-merge --labsprivate

Fetching new commits from https://gerrit.wikimedia.org/r/labs/private

remote: Counting objects: 1746, done

remote: Finding sources: 100% (40/40)

remote: Getting sizes: 100% (8/8)

remote: Total 40 (delta 26), reused 40 (delta 26)

Unpacking objects: 100% (40/40), done.

From https://gerrit.wikimedia.org/r/labs/private

3c06697..1c2f71a master -> origin/master

[..]

Noop test run on a node

You can do a dry run of your changes using:

# puppet agent --noop --test --debug

This will give you (among other things) a list of all the changes it would make.

Trigger a run on a node

Just run:

# run-puppet-agent

Puppet agent

Installation of the puppet service is handled via our automated installation. No production ready machines should have puppet manually installed.

If you're not following the Server Lifecycle/Reimage procedure (STRONGLY DISCOURAGED), initial root login can be done from any puppetmaster frontend or cumin master hosts (cumin1002.eqiad.wmnet, cumin2002.codfw.wmnet) with sudo install-console HOSTNAME. The script uses /root/.ssh/new_install ssh key and thus works also while debian-installer is running during PXE install.

The key is automatically removed at the first puppet run.

Communication with the puppetmaster server is over encrypted SSL and with signed certificates. To sign the certificate of the newly installed machine on the puppetmaster server, log in on the current ca_server (to find out: sudo puppet config print --section agent ca_server) and run:

puppet cert sign clienthostname

To check the list of outstanding, unsigned certificates, use:

puppet cert list

You can also do this via cookbook.

Reinstalls

When a server gets reinstalled, the existing certs/keys on the puppetmaster will not match the freshly generated keys on the client, and puppet will not work. Our automated reimaging script wmf-auto-reimage(-host) should be used in every case.

The manual steps would be as follows:

- Before a server runs puppet for the first time (again), on the puppetmaster host, the following command should be run to erase all history of a server:

puppet node clean clientfqdn

However, if this is done after puppet agent has already run and therefore has already generated new keys, this is not sufficient. To fix this situation on the !!! client !!!, use the following command to erase the newly generated keys/certificates:

find /var/lib/puppet -name "$(hostname -f)*" -exec rm -f {} \;
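The client-side cleanup above can also be sketched in Python, which makes it easy to preview the matches before deleting anything. This is a minimal sketch, not an existing tool: the function names and the dry_run switch are hypothetical, and unlike find it only considers regular files.

```python
from pathlib import Path
from typing import List


def find_host_cert_files(puppet_dir: Path, fqdn: str) -> List[Path]:
    """Return regular files under puppet_dir whose name starts with fqdn.

    Roughly mirrors: find <puppet_dir> -name "<fqdn>*"
    """
    return sorted(p for p in puppet_dir.rglob(f"{fqdn}*") if p.is_file())


def remove_host_cert_files(puppet_dir: Path, fqdn: str, dry_run: bool = True) -> List[Path]:
    """List the matching files and delete them only when dry_run is False."""
    matches = find_host_cert_files(puppet_dir, fqdn)
    if not dry_run:
        for path in matches:
            path.unlink()
    return matches
```

Calling remove_host_cert_files(Path("/var/lib/puppet"), fqdn) with the default dry_run=True only reports what would be removed.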

Forcing a Puppet run against a specific server

By default the Puppet agent resolves an adequate puppet server via DNS service records. To run the agent explicitly against a specific server, this needs to be disabled:

sudo puppet agent --verbose --onetime --no-daemonize --show_diff --no-splay --server puppetserver2003.codfw.wmnet --no-use_srv_records
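If this command needs to be generated for different servers (for example from a script), a small helper can build it. This is only a sketch: the function name agent_command is hypothetical, and the flags are exactly the ones shown in the command above.

```python
import shlex
from typing import List


def agent_command(server: str) -> List[str]:
    """Build the argument list for a one-off agent run against one server."""
    return [
        "sudo", "puppet", "agent",
        "--verbose", "--onetime", "--no-daemonize", "--show_diff", "--no-splay",
        "--server", server,
        # Disable SRV record lookups so that --server is actually honoured
        "--no-use_srv_records",
    ]


print(shlex.join(agent_command("puppetserver2003.codfw.wmnet")))
```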

SANs for puppet certs

If you want to add SANs to your puppet certificate, you can do that with the following:

- Make sure that puppet is already successfully running on the instance whose certificates need to have SANs added

- Set the profile::base::puppet::dns_alt_names hiera key to a comma-separated list of domains you'd like to be in the SAN. See puppetlabs's site for docs.

- Run puppet a couple of times to make sure that /etc/puppet/puppet.conf has a line under [agent] setting dns_alt_names

- On the puppetmaster, revoke the current certificate for the host with puppet cert clean <fqdn-of-host>

- On the client, clean out all the certs with rm -rf /var/lib/puppet/ssl

- Run puppet agent -tv on the client to regenerate a certificate and submit it to the puppetmaster for signing. This CSR will have the SANs you specified in step 2 in it.

- On the server, sign the CSR with puppet cert --allow-dns-alt-names sign <fqdn-of-host>.

Done!
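To double-check the puppet.conf step before regenerating certificates, the file can be parsed as an INI document. The sketch below assumes puppet.conf only contains settings inside sections such as [main] and [agent]; the helper name agent_alt_names is hypothetical.

```python
import configparser
from typing import List


def agent_alt_names(puppet_conf_text: str) -> List[str]:
    """Return the dns_alt_names entries found under [agent], or an empty list."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(puppet_conf_text)
    raw = parser.get("agent", "dns_alt_names", fallback="")
    return [name.strip() for name in raw.split(",") if name.strip()]
```

For example, agent_alt_names(open("/etc/puppet/puppet.conf").read()) should list the same domains that were set in hiera.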

Misc

Sometimes you want to purge info for a host from the puppet db. The below will do it for you:

puppet node clean fqdn

on the puppet master. All references, i.e. the host entry and all facts going with it, will be tossed. It is important to note that the ssl certificate will be tossed as well, so you will need to re-generate and sign a new cert after the fact.

Maintenance

When performing some maintenance on a host that requires puppet to be disabled, it can be done with (the current $SUDO_USER will be automatically appended to the message):

sudo disable-puppet "some reason - T12345"

To re-enable it run (the current $SUDO_USER will be automatically appended to the message):

sudo enable-puppet "some reason - T12345"

To re-enable it and contextually run puppet:

sudo run-puppet-agent -e "some reason - T12345"

Facter

facter is a tool that collects and reports data about a puppet node.

It reports some data by default, like cpu information, uptime, ip addresses, but it can also return custom facts. Custom facts are ruby scripts used to return more information, like the raid management tool used on a server.

Unlike hiera which is used for declarative input, facter is used as system information input for puppet.

sudo facter -p

facter -p networking.ip

sudo facter -p raid_mgmt_tools
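Facter can also emit JSON (facter -p --json), which is convenient for scripting. The sketch below shows how a dotted fact name such as networking.ip maps onto the nested JSON structure; the helper name lookup_fact and the sample values are made up for illustration.

```python
import json
from typing import Any


def lookup_fact(facts: dict, dotted_name: str) -> Any:
    """Resolve a dotted fact name (e.g. 'networking.ip') in a nested facts dict."""
    value: Any = facts
    for key in dotted_name.split("."):
        value = value[key]
    return value


# Illustrative data only, shaped like the output of `facter -p --json`
sample = json.loads('{"networking": {"ip": "192.0.2.10"}, "raid_mgmt_tools": ["megaraid"]}')
print(lookup_fact(sample, "networking.ip"))
```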

Puppetmaster

As of late 2016 we have a 3-layer infrastructure for our puppet masters:

- Each main datacenter has its own 3-layer infrastructure, linked with the one in the other.

- The first layer is the puppetmaster frontend, running on one machine per DC. It runs on port 8140 and only accepts connections via HTTPS, and proxies them to backends for everything besides static content. Some specific requests like certificate signing requests and requests for volatile data will be redirected to the local backend (see below) if the server is the current designated master. If it's not, requests are proxied to the frontend on the current master.

- The second layer consists of the puppetmaster backends, which listen on port 8141 and run the puppetmaster application via apache/mod_passenger. One instance of the backend is also installed on the frontend servers. This is what does most of the server-side work.

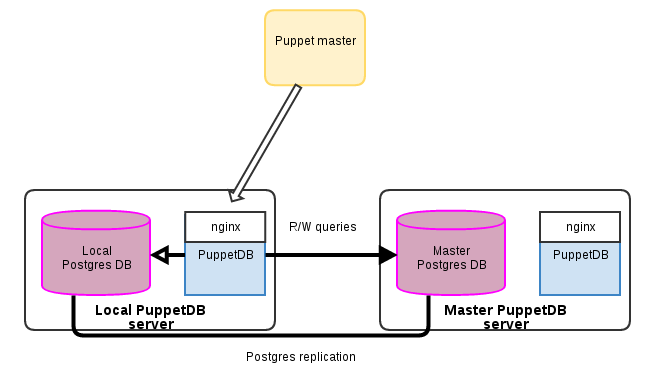

- The third layer is puppetDB, where the backend application stores agent-provided facts, compiled catalogs and resources; it can also be queried by the masters in order to fetch information (from e.g. exported resources, and more) about the nodes. The puppetDB architecture is pretty complex in itself, so it is explained in more detail below.
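The frontend routing rules described above can be summarised as a small decision function. This is purely illustrative: the real logic lives in the apache configuration, and the RequestKind names and return strings below are hypothetical.

```python
from enum import Enum


class RequestKind(Enum):
    STATIC = "static content"
    CA = "certificate signing request"
    VOLATILE = "volatile data"
    OTHER = "everything else"


def route(kind: RequestKind, is_designated_master: bool) -> str:
    """Describe where a frontend (port 8140) sends a request of the given kind."""
    if kind is RequestKind.STATIC:
        return "served by this frontend"
    if kind in (RequestKind.CA, RequestKind.VOLATILE):
        # These must end up on the single designated master
        if is_designated_master:
            return "local backend (port 8141)"
        return "frontend of the designated master (port 8140)"
    return "one of the backends (port 8141)"
```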

PuppetDB

PuppetDB is a clojure application that exposes a somewhat-RESTful interface to retrieve information about puppet catalog, resources, facts. At the time of writing, we're using puppetDB version 2.3, which is the last to be compatible with puppet 3.x. PuppetDB uses Postgres to store its data.

A web frontend for PuppetDB is available at puppetboard.wikimedia.org

Our PuppetDB infrastructure is built for scaling-out and high availability as follows:

- Each datacenter has one puppetdb server, that at the moment hosts both the clojure application and the Postgres server.

- Queries from the puppetmasters in one datacenter normally flow to the local puppetdb application

- Read-only queries go to the local postgres server; writes are done by connecting (over SSL) to whichever postgres instance is the master.

- Postgres instances are set up in a primary/read-only replica configuration

Debugging

It might at times be useful to see the queries being served by puppetdb. That can be done by inspecting the HTTP requests received by puppetdb on, for instance, puppetdb1001.eqiad.wmnet:

sudo httpry 'tcp port 8080' -i lo -m GET

[...]

2018-04-10 10:59:58 127.0.0.1 127.0.0.1 > GET localhost:8080 /pdb/query/v4/resources?query=[%22and%22,[%22=%22,%22type%22,%22Class%22],[%22=%22,%22title%22,%22Profile::Cumin::Target%22],[%22=%22,%22exported%22,false]] HTTP/1.0 - -

To get the result of the query above, replacing %22 with ":

curl -G localhost:8080/pdb/query/v4/resources --data-urlencode 'query=["and",["=","type","Class"],["=","title","Profile::Cumin::Target"],["=","exported",false]]'
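Instead of replacing %22 by hand, the request path captured by httpry can be decoded with the Python standard library. A minimal sketch; the function name decode_pdb_query is hypothetical.

```python
import json
from urllib.parse import parse_qs, urlsplit


def decode_pdb_query(request_path: str):
    """Extract and decode the JSON 'query' parameter from a PuppetDB request path."""
    query_string = urlsplit(request_path).query
    params = parse_qs(query_string)
    return json.loads(params["query"][0])


path = (
    "/pdb/query/v4/resources?query=[%22and%22,[%22=%22,%22type%22,%22Class%22],"
    "[%22=%22,%22title%22,%22Profile::Cumin::Target%22],[%22=%22,%22exported%22,false]]"
)
print(json.dumps(decode_pdb_query(path)))
```

The printed JSON can be passed as-is to curl via --data-urlencode 'query=...'.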

check_puppet_run_changes

This check queries PuppetDB to find hosts that perform a change on every puppet run. When the check alerts, it should show you a list of all hosts detected. The quickest way to see the failures is to go to the node's page in Puppetboard (https://puppetboard.wikimedia.org/node/$FQDN), check the list of recent Puppet runs, and look at the changes in each of them by clicking on the blue "changed" button. The other way is to log in to the machine and manually run puppet a few times to see which resource(s) try to make a change on every run. Some common issues that have been observed are:

- Attempting to absent a directory without using force => true

- Installing a package via its alias.

- Service is started by puppet but silently fails shortly after
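The idea behind the check can be sketched as follows. This is not the actual check implementation: it assumes the per-host report statuses (changed, unchanged, failed) have already been fetched from PuppetDB, oldest first, and the function name and the min_runs threshold are hypothetical.

```python
from typing import Dict, List


def always_changing(run_statuses: Dict[str, List[str]], min_runs: int = 3) -> List[str]:
    """Return hosts whose last min_runs reports all have status 'changed'."""
    return sorted(
        host
        for host, statuses in run_statuses.items()
        if len(statuses) >= min_runs
        and all(status == "changed" for status in statuses[-min_runs:])
    )
```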

node cleanup

In case there are stale entries in puppetdb for servers that no longer exist, you can clean up the node using these commands on the puppetmaster:

user@puppetmaster1001:~ $ sudo puppet node clean $FQDN

[...]

user@puppetmaster1001:~ $ sudo puppet node deactivate $FQDN

Submitted 'deactivate node' for $FQDN with UUID 3064c051-5435-xxxx-xxxx-89e5e8359e7c

If what needs cleanup is on client side only (for example, if a reimage was aborted after the certificate has been revoked already), you can do:

user@client_node2002:~ $ sudo find /var/lib/puppet/ssl -name $FQDN.pem -delete

And on next run a new certificate will be automatically generated.

Micro Service

puppetdb runs a small microservice which exposes a limited set of the PuppetDB API to trusted hosts. This service is configured with DNS discovery, which points to both the codfw and eqiad puppetdb servers, and as such can be depooled using conftool.

The service is currently used by:

- netbox

- pki

- cuminpriv

Operations

Pool / depool a backend

Puppet master backends are controlled by hieradata/common/puppetmaster.yaml. Marking a given backend as offline by adding offline: true to its entry will depool it from apache on the frontends. Removing the worker entry altogether will also remove said backend from e.g. firewall rules, so make sure a backend is not receiving traffic before removing the entry. Removing the entry will also prevent the backend from getting puppet-merge updates pushed, which is useful when e.g. a backend is expected to be offline for long periods of time.

When pooling a new backend, the recommended procedure is to first add the entry with offline: true to allow the backend to e.g. receive updates from puppet-merge, be granted access to puppetdb, etc. After verifying the backend is working as expected and puppet has run, remove the offline: true entry.

Pool / depool a frontend

Puppetmaster frontends are found by agents via the (unqualified) puppet DNS name, thus there are different records for each site under wmnet plus wikimedia.org.

To pool a frontend into service it is sufficient to change one/more DNS CNAMEs for puppet to point to it (e.g. 421060).

Puppetmaster frontends also serve etcd configuration pulled from etcd itself, via the config-master virtualhost. To pool a frontend for config-master it should be present both in DNS and varnish: 421918 and 421919 respectively.

Usually these activities of pooling/depooling are related to reimages, see this Phabricator task with a detailed description of such reimage.

Debugging

Using

# puppet agent --test --trace --debug

You get maximum output from puppet.

You can see a list of classes that are being included on a given puppet host, by checking the file /var/lib/puppet/state/classes.txt.

With --evaltrace, puppet will show the resources as they are being evaluated:

# puppet agent -tv --evaltrace

info: Class[Apt::Update]: Starting to evaluate the resource

info: Class[Apt::Update]: Evaluated in 0.00 seconds

info: /Stage[first]/Apt::Update/Exec[/usr/bin/apt-get update]: Starting to evaluate the resource

notice: /Stage[first]/Apt::Update/Exec[/usr/bin/apt-get update]/returns: executed successfully

info: /Stage[first]/Apt::Update/Exec[/usr/bin/apt-get update]: Evaluated in 16.24 seconds

info: Class[Apt::Update]: Starting to evaluate the resource

info: Class[Apt::Update]: Evaluated in 0.01 seconds

...

Most of the puppet configuration parameters can be passed as long options (e.g. evaltrace can be passed as --evaltrace).

pdb-changes - Audit changes during a specific time window

Sometimes it's useful to get a list of all resources that changed during a specific time window. This could be useful for:

- incident analysis - see what changed just before an incident

- fixing a bad puppet change - sometimes changes can't be fixed by a simple rollback, so it can be useful to see what was unintentionally affected by a specific change

- auditing a change rollout - PCC is great but there are still some things it doesn't capture, e.g. exec resources.

The script is installed on the puppet masters and can be run as follows:

# basic query with various verbosity levels

$ sudo pdb-changes '2 mins ago'

11:06:03: idp-test1002.wikimedia.org - (705ea23edf) jelto - gitlab runner: allow golang:* images

$ sudo pdb-changes '2 mins ago' -v

11:06:03: idp-test1002.wikimedia.org - (705ea23edf) jelto - gitlab runner: allow golang:* images

Exec[git_pull_operations/software/cas-overlay-template]: (returns)

$ sudo pdb-changes '3 mins ago' -vv

11:06:03: idp-test1002.wikimedia.org - (705ea23edf) jelto - gitlab runner: allow golang:* images

Exec[git_pull_operations/software/cas-overlay-template]: (returns)

--notrun

++["0"]

# look for events in the past

$ sudo pdb-changes --end-date '55 mins ago' '1 hour ago'

10:12:11: ncredir6002.drmrs.wmnet - (b3397a7f1d) Jelto - gitlab_runner: make allowed_images list configurable in hiera

10:12:09: ncredir4002.ulsfo.wmnet - (b3397a7f1d) Jelto - gitlab_runner: make allowed_images list configurable in hiera

10:13:30: ncredir5001.eqsin.wmnet - (b3397a7f1d) Jelto - gitlab_runner: make allowed_images list configurable in hiera

10:13:14: releases1002.eqiad.wmnet - (b3397a7f1d) Jelto - gitlab_runner: make allowed_images list configurable in hiera

10:13:29: elastic2079.codfw.wmnet - (b3397a7f1d) Jelto - gitlab_runner: make allowed_images list configurable in hiera

10:14:46: ncredir4001.ulsfo.wmnet - (3230cda983) jbond - hiera: add default for profile::docker::builder::known_uid_mappings

10:16:11: ncredir5002.eqsin.wmnet - (3230cda983) jbond - hiera: add default for profile::docker::builder::known_uid_mappings

# filter for specific host

$ sudo pdb-changes --end-date '55 mins ago' '1 hour ago' --node-regex 'elastic\d+'

10:13:29: elastic2079.codfw.wmnet - (b3397a7f1d) Jelto - gitlab_runner: make allowed_images list configurable in hiera

Also see P35491 for more example output
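For further processing, the summary lines printed by pdb-changes can be parsed into structured records. The sketch below is based only on the example output above: it assumes the author field is a single token, and the names LINE_RE, Change and parse_change are hypothetical.

```python
import re
from typing import NamedTuple, Optional

# time: node - (commit) author - subject
LINE_RE = re.compile(
    r"^(?P<time>\d{2}:\d{2}:\d{2}): (?P<node>\S+) - \((?P<commit>[0-9a-f]+)\) "
    r"(?P<author>\S+) - (?P<subject>.*)$"
)


class Change(NamedTuple):
    time: str
    node: str
    commit: str
    author: str
    subject: str


def parse_change(line: str) -> Optional[Change]:
    """Parse one summary line; return None for detail lines (e.g. -v output)."""
    match = LINE_RE.match(line.strip())
    return Change(**match.groupdict()) if match else None
```

Detail lines such as the Exec[...] entries shown with -v do not match and are returned as None, so they can be skipped or attached to the preceding record.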

Errors

Occasionally you may see puppet fill up disks, which then results in yaml errors during puppet runs. If so, you can run the following on the puppet master, but do so very, very carefully:

cd /var/lib/puppet

find . -name "*<servername>*.yaml"

# if safe

find . -name "*<servername>*.yaml" -delete

Check .erb template syntax

ERB files are easy to syntax check. For a file mytemplate.erb, run

erb -x -T '-' mytemplate.erb | ruby -c

Audit resources via puppetdb

Please see the PQL page for more examples

From time to time it is useful to run audits on resource usage (classes, defines, etc) across the codebase in production. To this end, you can query puppetdb from Python, for example to list all Systemd::Timer::Job resources that have monitoring enabled, together with their monitoring_contact_groups parameter:

from pypuppetdb import connect

# Connect to PuppetDB using the library defaults
db = connect()

# PQL query: Systemd::Timer::Job resources with monitoring enabled
pql = """
resources{
  type = 'Systemd::Timer::Job' and
  parameters.monitoring_enabled = true
}
"""

resources = db.pql(pql)

for resource in resources:
    print(f'{resource} ({resource.parameters["monitoring_contact_groups"]})')

Icinga alerts

check_client_bucket_large_file

This check fires if large files have been found in the client bucket directory (/var/lib/puppet/clientbucket/). Files are copied to this directory when they are being managed in some way by puppet. Puppet uses the copy to display diffs as well as a backup (not to be relied upon). Puppet is not great at managing large files in general, but especially if it needs to display diffs for them. As such we should avoid managing large files with puppet at all. However, if you really do need to, you should:

- place the file in the volatile directory directly on the puppetmaster and not store it in git

- ensure you set backup => false on the file resource

You should be able to find the large files using the following one-liner:

$ find /var/lib/puppet/clientbucket -type f -size +100M | while read -r line ; do cat "$(dirname "${line}")"/paths ; done | sort -u
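The same lookup can be sketched in Python, which is handy if the result needs further processing. It assumes, as the one-liner does, that each bucket entry directory contains a paths file listing the original file locations; the function name large_bucket_paths is hypothetical.

```python
from pathlib import Path
from typing import Set


def large_bucket_paths(bucket_root: Path, min_bytes: int = 100 * 1024 * 1024) -> Set[str]:
    """Return the original paths of bucketed files larger than min_bytes."""
    managed: Set[str] = set()
    for entry in bucket_root.rglob("*"):
        if entry.is_file() and entry.stat().st_size > min_bytes:
            # The sibling 'paths' file records where the bucketed file came from
            paths_file = entry.parent / "paths"
            if paths_file.is_file():
                managed.update(
                    line for line in paths_file.read_text().splitlines() if line
                )
    return managed
```

For example, large_bucket_paths(Path("/var/lib/puppet/clientbucket")) returns the set of managed files that triggered the alert.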

Troubleshooting

puppet master spewing 500s

A storm of puppet failures is usually due to the clients not being able to talk to the master(s). If that happens, first identify the failing puppet master; there should be a nagios check on HTTP checking for 200s. Once on the puppet master, check that apache children are present, in particular mod_passenger's passenger-spawn-server, and that there are "master" processes running. Their stdout/stderr are connected to /var/log/apache2/error.log, so that will provide some guidance. If e.g. passenger-spawn-server crashed, it would be sufficient to restart apache.

puppet-merge fails to sync on secondary

Sometimes puppet-merge might fail to sync on the secondary for whatever reason (see also https://phabricator.wikimedia.org/T128895). This is easily fixed by SSHing into the server where the command failed and running:

sudo puppet-merge

force puppet agent to use a specific puppetmaster

If you have multiple puppet masters, maybe using different versions, you may want to force an agent to use a specific master.

See this example using boron and a test puppetmaster:

root@boron:~# puppet agent --test --server puppetmaster.test.eqiad.wmnet

Where boron has in /etc/hosts the puppetmaster frontend (puppetmaster1001):

10.64.16.73 puppetmaster.test.eqiad.wmnet

And the puppetmaster frontend itself has the server you'd like to test in its hiera settings as profile::puppetmaster::frontend::test_servers

Git is down (and requires a puppet change to put it back)

//TODO

Volatile mount

The volatile mount is used to store files that are not suitable for storage in git, most often because they are too large or because they are generated via some cron script.

Files should be added on the puppet CA host. They are then rsynced to the other puppetmasters via a systemd::timer::job (sync-puppet-volatile.timer).

Currently the volatile partition hosts the following files

- external_cloud_vendors: a json file listing public cloud networks, generated using the external_clouds_vendors puppet class

- GeoIP, GeoIPInfo: the puppet module geoip installs these files

- ip_reputation_vendors: a json file listing ip reputations, specifically proxy ips, generated using the ip_reputation_vendors puppet class

- swift: this is used to distribute swift rings. The directory is populated via profile::puppetmaster::fetch_swift_rings

- tftpboot: this hosts the debian installer images used to install servers. These are updated manually, mostly via the update-netboot-image script

Private puppet

Our main puppet repo is publicly visible and accepts (via gerrit review) volunteer submissions. Certain information (passwords, keys, etc.) cannot be made public, and lives in a separate, private puppet repository.

The private repository is stored on puppetserverXXXX server hosts in /srv/git/private. Before T368023, it was hosted on puppetmaster1001 but now it is hosted on puppetserver1001. Technically any puppetserverXXXX host can be used to push private updates, but it is recommended to only use puppetserver1001. The puppetmasterXXXX nodes will be kept only as long as the Puppet 5 infrastructure is needed (see T365798 for more info about its deprecation plan).

It is not managed by gerrit or subject to review; changes are made there by logging in, editing and committing directly on the host itself (preferred one being puppetserver1001). Changes to /srv/git/private are distributed to puppetmasters and puppetservers automatically via a post-commit hook.

The puppet master pulls private data from /var/lib/git/operations/private but you don't need to edit there, it should be synced automatically by the post-commit hook in /srv/private.

On puppetserver nodes, the data is pulled from /srv/git/operations/private (and automatically synced from /srv/git/private).

To properly attribute a change to a user, commit under your own user with sudo, e.g. "sudo git commit -a" or use a "sudo -i bash" shell.

The data in the private repository is highly sensitive and should not ever be copied onto your local machine or to anywhere outside of a puppetmaster/puppetserver system.

Nowadays, most things in the private repo should be class parameters defined with Puppet Hiera. Those reside under private/hieradata and have the big advantage they don't need to get replicated in a second repository (see below).

Public (fake) private puppet repo

In order to satisfy puppet dependencies while retaining security, there is also a 'labs private' repo which the labs puppetmaster uses in place of the actual, secure private repo. The labs private repo lives on Gerrit and consists mainly of disposable keys and dummy passwords. In the case of hieradata in the private repo, in most cases labs can be happy with class defaults or with some data you can put in labs.yaml in the public hiera repository.

See also how to merge patches to this repo.

Puppet CA

The server hosting the private git repo (see above) also hosts the Puppet CA (certificate authority) and runs puppet-master in "CA mode", in other words it is the endpoint that agents use to ask for signatures and obtain their certs. It is also the host where puppet cert commands are able to operate.

Failover

When doing maintenance on the puppet CA host it is necessary to failover the CA onto another puppetmaster frontend. Make sure the change is announced a little ahead of time to ops@lists.wikimedia.org to give folks the heads up.

- Disable puppet across the fleet: cumin -p 95 -b 100 '*' "disable-puppet 'temporarily disabled for puppet ca relocation - USERNAME'"

- Ensure rsync/git (ca, private and volatile) destinations are up to date on the destination frontend.

- /var/lib/puppet/server/ssl/ca

- /var/lib/puppet/volatile (Puppet 5) or /srv/puppet_fileserver/volatile (Puppet 7)

- /srv/private/

- Make backup copies of /var/lib/puppet in case of disaster

- Flip puppetmaster::ca_server in puppet to point to the new host.

- Enable puppet on the old CA host, verify that the apache config now uses ProxyPass to the new host.

- Enable puppet on the new CA host, verify puppet-master can start and serve requests.

- Run puppet agent on a few canary hosts to check all is well.

- Reenable puppet across the fleet: cumin -p 70 -b 100 '*' "enable-puppet 'temporarily disabled for puppet ca relocation - USER'"

See also bug T189891 for a task detailing a puppet CA failover.

Renew agent certificate

Agent certificates last for 5 years by default, so any server that stays in service that long will need its puppet agent certificate renewed. Use the sre.puppet.renew-cert cookbook to renew the certificate:

$ sudo cookbook sre.puppet.renew-cert sretest1002.eqiad.wmnet

sretest1001.eqiad.wmnet

START - Cookbook sre.puppet.renew-cert

Scheduling downtime on Icinga server alert1001.wikimedia.org for hosts: sretest1001.eqiad.wmnet

Disabling Puppet with reason "Renew puppet certificate - jbond@cumin1001" on 1 hosts: sretest1001.eqiad.wmnet

Deleting local Puppet certificate on 1 hosts: sretest1001.eqiad.wmnet

Generating a new Puppet certificate on 1 hosts: sretest1001.eqiad.wmnet

Generated CSR for host sretest1001.eqiad.wmnet: F3:2C:B6:79:E1:BE:FB:4B:56:7E:EA:84:3E:0B:6A:FC:F9:D5:49:EE:86:8D:F9:7F:D5:53:33:BA:2B:9F:83:37

Signing CSR for sretest1001.eqiad.wmnet with fingerprint F3:2C:B6:79:E1:BE:FB:4B:56:7E:EA:84:3E:0B:6A:FC:F9:D5:49:EE:86:8D:F9:7F:D5:53:33:BA:2B:9F:83:37

Running Puppet with args --enable "Renew puppet certificate - jbond@cumin1001" --quiet on 1 hosts: sretest1001.eqiad.wmnet

END (PASS) - Cookbook sre.puppet.renew-cert (exit_code=0)

If you reached this page from Icinga and you want to know the list of hosts to renew, you have two ways:

- Click on the Icinga alert to expand it and you should see the complete message, containing the hosts list.

- Execute the Nagios check directly on the puppetmaster (something like /usr/local/lib/nagios/plugins/nrpe_check_puppetca_expired_certs /var/lib/puppet/server/ssl/ca/signed 2419200 604800, but check the actual/up-to-date values on puppet first).
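The two numeric arguments in the example invocation are durations in seconds: 2419200 is 28 days and 604800 is 7 days. Assuming they are the warning and critical thresholds respectively (an assumption, the plugin itself is not shown here), the classification can be sketched as follows; the function name expiry_state is hypothetical.

```python
from datetime import datetime, timedelta, timezone


def expiry_state(not_after: datetime, now: datetime,
                 warn_seconds: int = 2419200, crit_seconds: int = 604800) -> str:
    """Classify a certificate by how long it has left before not_after."""
    remaining = (not_after - now).total_seconds()
    if remaining <= crit_seconds:
        return "CRITICAL"  # expired, or expiring within the critical window
    if remaining <= warn_seconds:
        return "WARNING"
    return "OK"


now = datetime(2024, 1, 1, tzinfo=timezone.utc)
print(expiry_state(now + timedelta(days=20), now))
```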